If you’ve started tracking your monthly AI expenses, you’ve likely noticed token usage quietly driving up costs. “AI token management” means keeping track of the tokens (the word fragments that models read, write, and bill by) that your automated workflows send to and receive from cloud-based large language models. For Kansas businesses and local shops, these costs can scale faster than expected.

Tokens add up every time a prompt, document, or tool request is fed into an AI agent. Unlike the old days of fixed software licenses, each interaction — whether it’s a sales chatbot, automated PDF processor, or scheduling assistant — quietly tallies more tokens. The bigger the job and the more complex the workflow, the more you’ll pay.

Even routine team automation can balloon to thousands of dollars in overage if left unchecked — a cost that hits local operators harder than big tech firms.

Recent headlines about AI budgets going off the rails aren't just Silicon Valley drama. As reported by TechCrunch, major providers are pushing enterprise AI pricing upwards, making efficiency a must-have for small business survival.

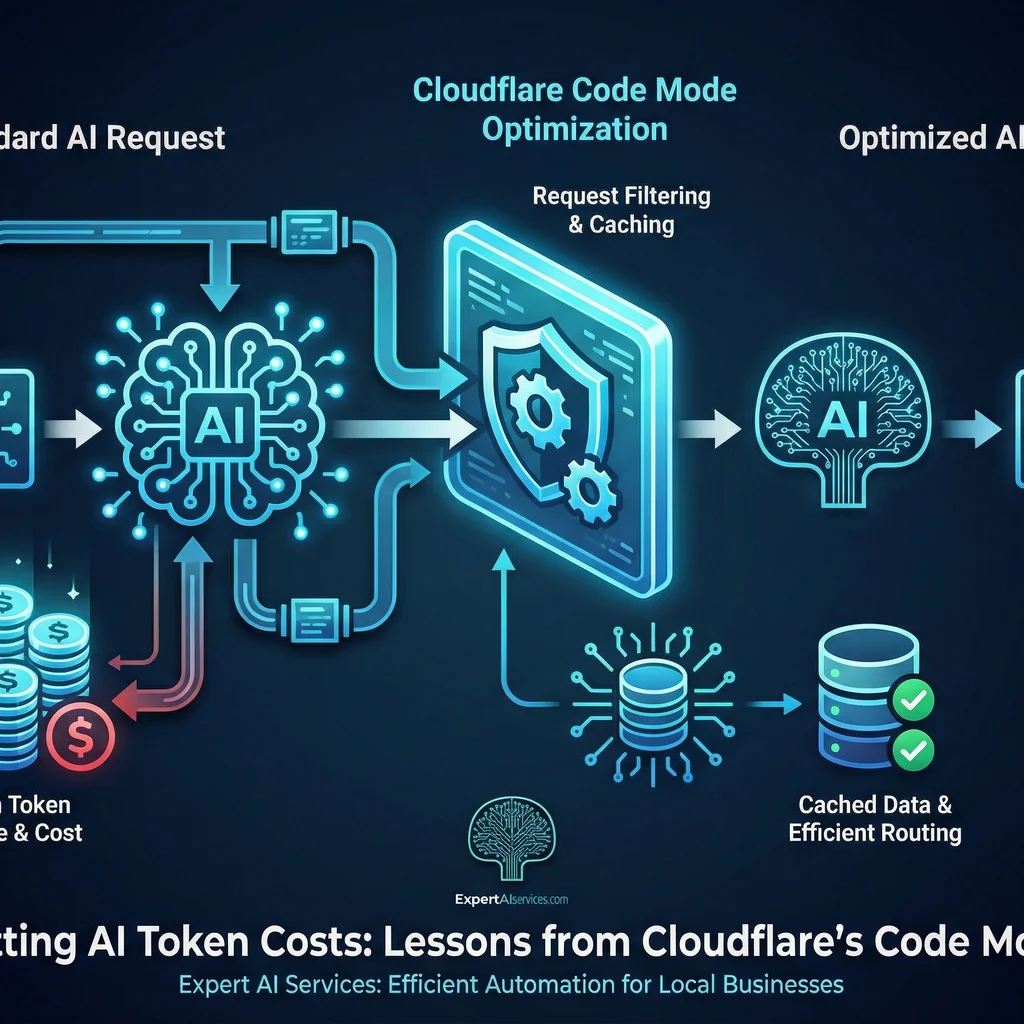

Cloudflare’s Code Mode builds on the Model Context Protocol (MCP), the open standard for connecting AI agents to external tools, and proposes a smarter way to give those agents access to business systems. Instead of sending bloated prompts or exhaustive API specs to the AI model, Code Mode compresses thousands of potential interactions into just two: search and execute. The result? You can reduce AI token costs by up to roughly three orders of magnitude, with Cloudflare’s announcement citing a 99.9% cut in token use.

Code Mode consolidates vast API catalogs into a simple code-first bridge. AI agents write compact, typed code — not verbose natural language — to get things done efficiently.
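
To make the bridge idea concrete, here is a minimal Python sketch of a two-tool setup. It is not Cloudflare’s implementation: the `TOOLS` catalog, the `get_customer` and `order_status` functions, and their return values are hypothetical stand-ins for a real business API. What it illustrates is that the model only ever sees two entry points, and tool descriptions are looked up on demand instead of being pasted into every prompt.

```python
from typing import Any, Callable

# Hypothetical tool implementations standing in for real business APIs.
def get_customer(name: str) -> dict[str, Any]:
    return {"name": name, "account_id": "A-100"}

def order_status(order_id: int) -> dict[str, Any]:
    return {"order_id": order_id, "status": "shipped"}

# Catalog: tool name -> (short description, callable). A real catalog could
# hold hundreds of entries; none of them are sent to the model up front.
TOOLS: dict[str, tuple[str, Callable[..., Any]]] = {
    "get_customer": ("Look up a customer account by name", get_customer),
    "order_status": ("Get the shipping status of an order", order_status),
}

def search(query: str) -> list[dict[str, str]]:
    """Return only the catalog entries whose name or description match."""
    q = query.lower()
    return [
        {"name": name, "description": desc}
        for name, (desc, _) in TOOLS.items()
        if q in name.lower() or q in desc.lower()
    ]

def execute(name: str, args: dict[str, Any]) -> Any:
    """Run one named tool with keyword arguments."""
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    _, func = TOOLS[name]
    return func(**args)
```

In this sketch, `search("customer")` returns a single short entry for `get_customer`, and `execute("order_status", {"order_id": 1234})` runs the matching function. However large the catalog grows, the agent’s prompt only needs to describe `search` and `execute`.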

This matters for small operations because the days of affordable, unlimited AI access are over. As Cloudflare’s Code Mode announcement details, running lean AI workflows isn’t just for global IT giants; it’s now table stakes for Main Street businesses aiming to stay competitive.

Let’s break down how Cloudflare’s Code Mode works in a practical, small-business context.

```
// Standard AI tool call (old way)
"Use the /getCustomer endpoint to lookup Jane Doe’s account… here are 300 API endpoints: ..."

// Code Mode
"search('customer', 'Jane Doe')"
"execute('order_status', {'order_id': 1234})"
```

As highlighted in the Model Context Protocol documentation, this code-first approach isn’t just about speed: it’s about future-proofing workflows, keeping costs predictable, and setting up for easier vendor swaps down the road.

Here’s how any Kansas business — from HVAC to contracting to local manufacturing — can put Code Mode principles to work:

search and execute commands; ask your AI partner about Model Context Protocol compatibility.

Pro tip: Tool consolidation isn’t just about cost. It makes automations easier to maintain, debug, and secure, and you’re less likely to get locked into one vendor or platform.

Despite the Code Mode example, many businesses still get tripped up. Watch out for these issues:

Simple prompting isn't always efficient: bulk, unedited prompts rack up huge, silent costs.

Instead, set a checklist: Add hybrid prompts (mixing code-first and natural language), monitor token logs, and periodically review your automation pipeline for inefficiencies.
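
One way to start on the "monitor token logs" item is a small script that totals tokens per workflow and flags anything above a budget. The sketch below assumes a hypothetical log format (records with `workflow`, `input_tokens`, and `output_tokens` fields) and a made-up budget of 50,000 tokens. Providers expose usage data in their own formats, so treat the field names as placeholders.

```python
from collections import defaultdict

def tokens_by_workflow(records):
    """Sum input and output tokens for each workflow name."""
    totals = defaultdict(int)
    for rec in records:
        totals[rec["workflow"]] += rec["input_tokens"] + rec["output_tokens"]
    return dict(totals)

def over_budget(totals, budget):
    """Return (workflow, tokens) pairs above the budget, largest first."""
    flagged = [(name, used) for name, used in totals.items() if used > budget]
    return sorted(flagged, key=lambda item: item[1], reverse=True)

# Hypothetical sample log entries, for illustration only.
sample_log = [
    {"workflow": "sales_chatbot", "input_tokens": 1200, "output_tokens": 300},
    {"workflow": "pdf_processor", "input_tokens": 90000, "output_tokens": 2000},
    {"workflow": "sales_chatbot", "input_tokens": 800, "output_tokens": 200},
]

totals = tokens_by_workflow(sample_log)
flagged = over_budget(totals, budget=50_000)
```

With this sample data, only `pdf_processor` ends up in `flagged`, which is the kind of workflow worth reviewing first.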

So, what are the savings? As cited in the Cloudflare Code Mode announcement, you could see a 99.9% reduction in token use for complex workflows, shrinking from 1.17 million tokens to roughly 1,000 per process.

While exact cost per token depends on your LLM provider, the difference — especially at scale — adds up quickly. Consider this:
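
As a rough illustration, the sketch below turns the token counts quoted above into dollars. The price of $3 per million tokens and the volume of 1,000 runs per month are assumptions picked to make the arithmetic easy to follow, not quotes from any provider. Substitute your own rates and volumes.

```python
def monthly_cost(tokens_per_run: int, runs_per_month: int, usd_per_million: float) -> float:
    """Cost in USD of sending `tokens_per_run` tokens, `runs_per_month` times."""
    return tokens_per_run * runs_per_month * usd_per_million / 1_000_000

# Token counts from the Cloudflare figures quoted above.
BEFORE_TOKENS = 1_170_000
AFTER_TOKENS = 1_000

# Assumed values, for illustration only.
USD_PER_MILLION = 3.00
RUNS_PER_MONTH = 1_000

before = monthly_cost(BEFORE_TOKENS, RUNS_PER_MONTH, USD_PER_MILLION)
after = monthly_cost(AFTER_TOKENS, RUNS_PER_MONTH, USD_PER_MILLION)
reduction = 1 - AFTER_TOKENS / BEFORE_TOKENS

print(f"before: ${before:,.2f}  after: ${after:,.2f}  reduction: {reduction:.1%}")
```

Under these assumed numbers, the monthly bill for that one workflow drops from $3,510.00 to $3.00, a 99.9% reduction. The percentage depends only on the token counts; the dollar amounts scale with whatever price and volume you plug in.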

The path to cost-effective, future-proof AI is fewer moving parts, code-first tool calls, and regular token audits — not just chasing the cheapest provider.

The landscape for AI token management is changing fast. By following lessons from Cloudflare’s Code Mode and the Model Context Protocol ecosystem, businesses can reduce AI token costs while building processes that are easier to maintain, swap, and secure.

Embracing these best practices means less wasted budget, fewer headaches, and more time spent on productive work — not firefighting automation issues.

The Kansas-first approach at Expert AI Services is “AI simplifies, it doesn’t replace.” When you’re ready to streamline, check out how applied AI works in the field with real communication tools — like SMSai — or start with an AI project readiness review.

Ready to cut costs, untangle complex workflows, and make AI truly work for your business? Talk with an AI integration lead who understands local industry realities and open source best practices.

Difficulty Level

Intermediate

Action Item

Audit and streamline your business's largest AI workflows to avoid inflated token costs, then implement code-first API calls using open standards like MCP.

Tools Mentioned

Cloudflare Code Mode, Model Context Protocol, OpenAI, Anthropic, SMSai

Time to Implement

1-2 hours for audit, ongoing improvements as workflows evolve